On Repeatable Internet Measurement: Part Two

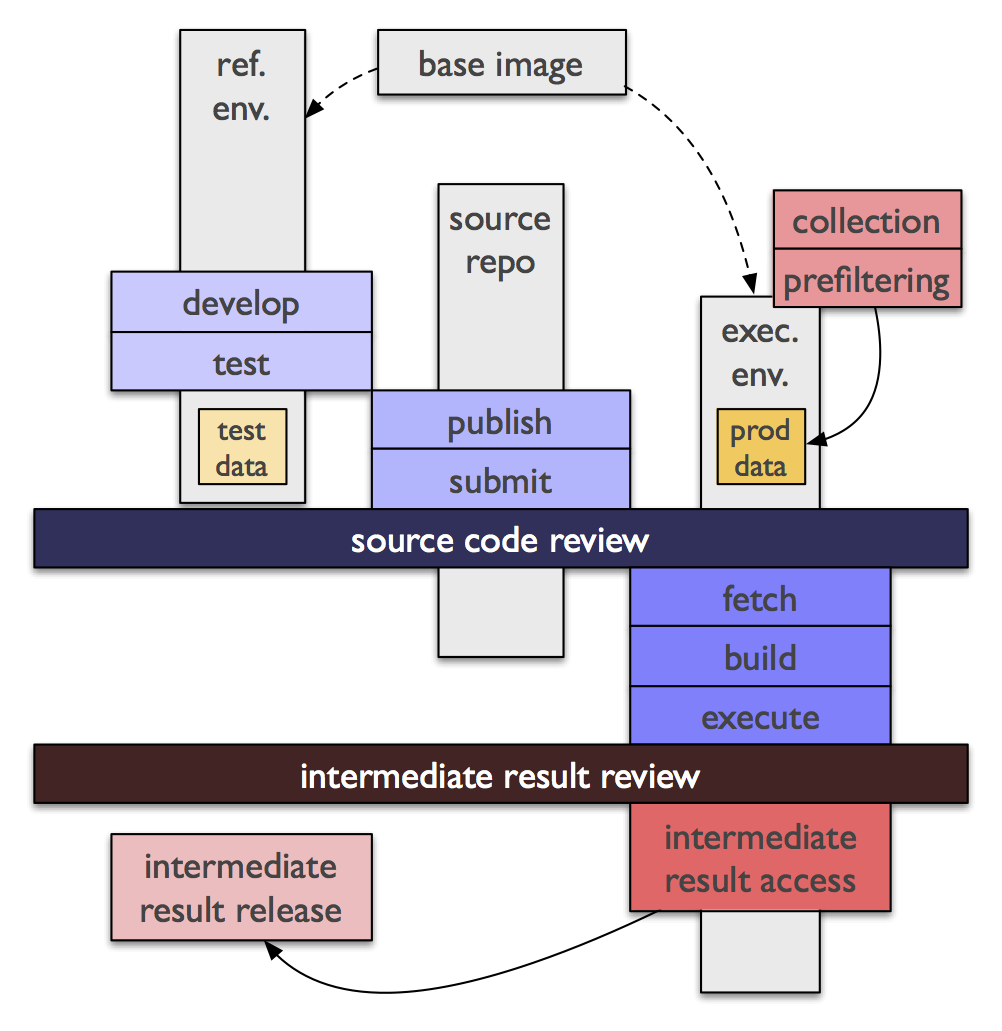

The issues identified in part one of this post led to yet another search for solutions to the problem of making measurement, especially passive measurement, repeatable. Of course, this has been done before, but I took as an initial principle that the social aspects of the problem must be solved socially, and worked from there. What emerged was a set of requirements and an architecture for a computing environment, together with a set of associated administrative processes, that allows analysis of network traffic data while minimizing risk to the privacy of the network’s end users and ensuring spatial and temporal repeatability of the experiment. For lack of a better name, I decided to call an instance of a collection of data using this architecture an analysis vault. The key principle behind this architecture is this: if data can be open, it should be; if not, then everything else must be. ...